Explainable AI for End-of-Line Inspection

A computer vision system that tackles the ‘black box’ problem in automated manufacturing lines: it explains its defect detections instead of only reporting them.

Overview

In safety-critical production environments, it is not enough for an AI to flag a defect; quality engineers need to know why a part was rejected. This project applies three Explainable AI (XAI) techniques, LRP, Grad-CAM, and LIME, to a YOLOv8 detector on an automated screw-inspection line, producing heatmaps and feature-importance visualizations.

📊 XAI Visualization Methods

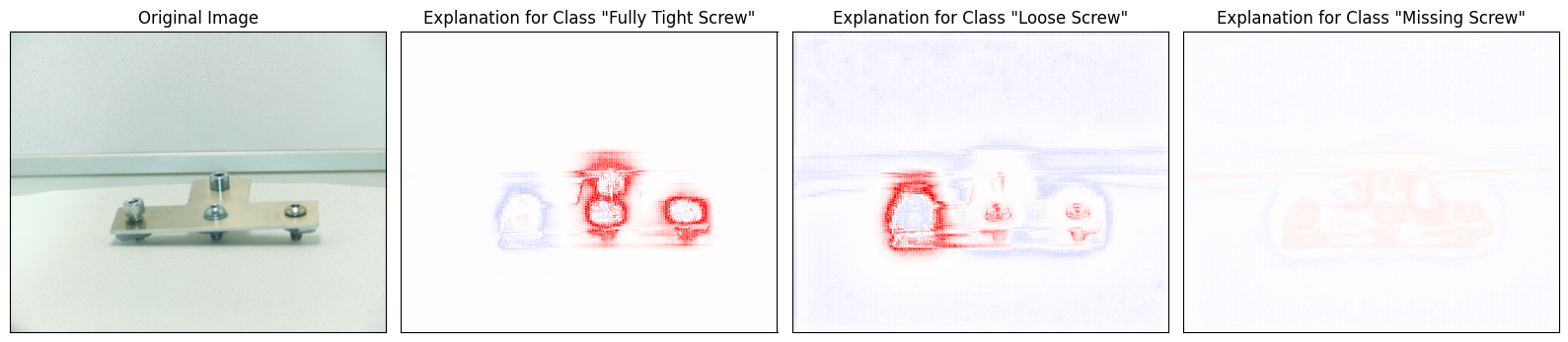

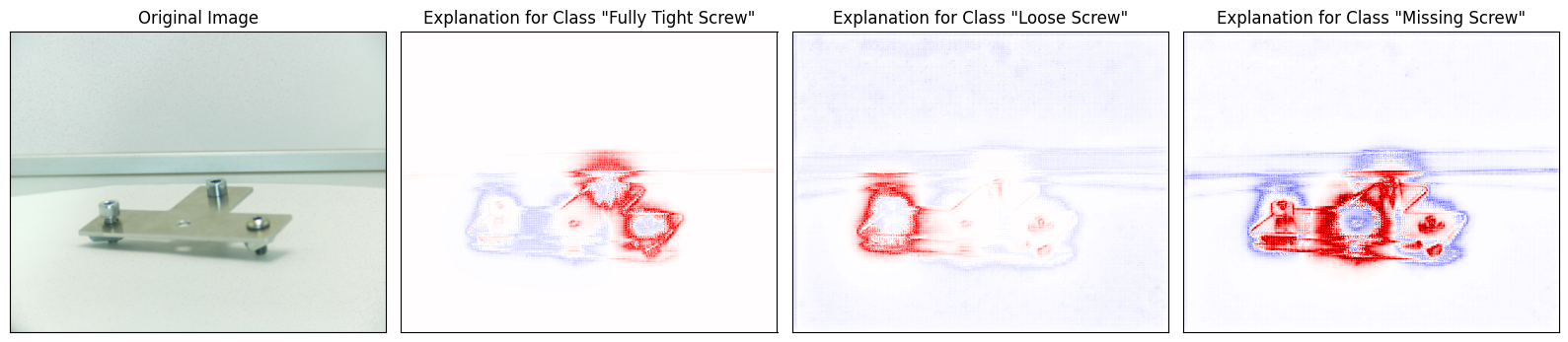

1. Layer-wise Relevance Propagation (LRP)

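LRP propagates the score of a chosen class backward through the network, layer by layer, and assigns each input pixel a relevance value that shows how much it contributed to that prediction.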

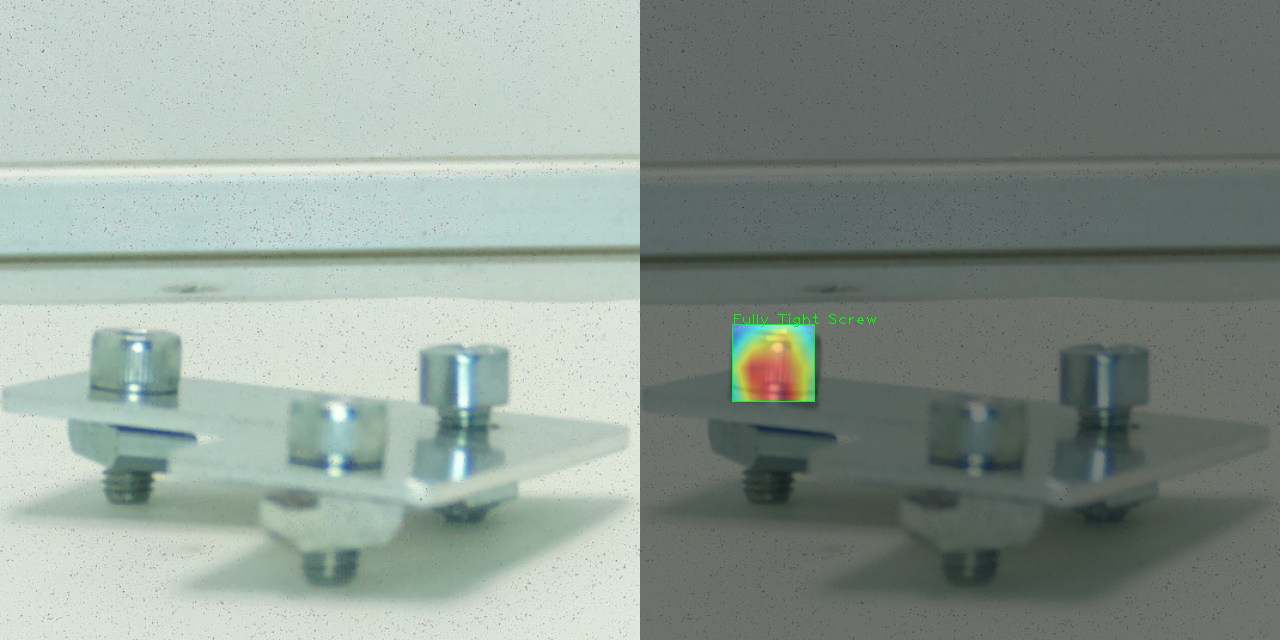
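To make the propagation step concrete, here is a minimal NumPy sketch of the LRP epsilon rule on a tiny two-layer ReLU network. It is an illustration under stated assumptions, not this project's Captum-based code: the network, its random weights, and the helper name `lrp_epsilon_dense` are all hypothetical, and biases are omitted so that relevance is conserved from layer to layer.

```python
import numpy as np

def lrp_epsilon_dense(a, W, R_out, eps=1e-6):
    """Propagate relevance R_out back through one dense layer z = a @ W (no bias)."""
    z = a @ W                                   # pre-activations, shape (out,)
    z = z + eps * np.where(z >= 0, 1.0, -1.0)   # stabiliser avoids division by zero
    s = R_out / z                               # relevance per unit of pre-activation
    return a * (W @ s)                          # share relevance among inputs, shape (in,)

# Hypothetical toy network: 16 inputs -> 8 hidden ReLU units -> 3 class scores.
rng = np.random.default_rng(0)
x = rng.random(16)                  # stand-in for a flattened 4x4 image patch
W1 = rng.normal(size=(16, 8))
W2 = rng.normal(size=(8, 3))

h = np.maximum(0.0, x @ W1)         # hidden activations
logits = h @ W2
target = int(np.argmax(logits))

# Start with all relevance on the predicted class, then walk back layer by layer.
R_logits = np.zeros(3)
R_logits[target] = logits[target]
R_hidden = lrp_epsilon_dense(h, W2, R_logits)
R_input = lrp_epsilon_dense(x, W1, R_hidden)

heatmap = R_input.reshape(4, 4)     # per-pixel relevance map
```

Because there are no biases, the pixel relevances sum (up to the stabiliser) to the score of the target class, which is a quick sanity check for any LRP implementation.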

2. Grad-CAM Activation

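Grad-CAM weights the feature maps of the final convolutional layers by the gradient of the class score, producing a coarse heatmap of the image regions the model relied on.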

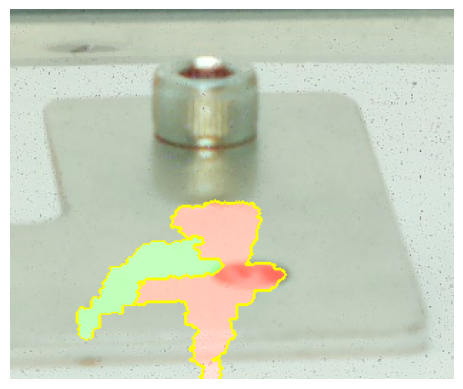
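The core Grad-CAM computation is short once the activations and gradients of the target layer are available. The sketch below assumes both have already been captured as `(C, H, W)` arrays; the function name `grad_cam` and the toy inputs are illustrative and not taken from this repository.

```python
import numpy as np

def grad_cam(activations, gradients):
    """Grad-CAM map from (C, H, W) activations and gradients of one conv layer."""
    weights = gradients.mean(axis=(1, 2))              # average gradient per channel, (C,)
    cam = np.tensordot(weights, activations, axes=1)   # weighted channel sum, (H, W)
    cam = np.maximum(cam, 0.0)                         # keep only positive evidence
    peak = cam.max()
    return cam / peak if peak > 0 else cam             # scale to [0, 1]

# Toy input: channel 0 fires top-left and supports the class,
# channel 1 fires bottom-right and counts against it.
acts = np.zeros((2, 4, 4))
acts[0, 0, 0] = 1.0
acts[1, 3, 3] = 1.0
grads = np.stack([np.full((4, 4), 0.5), np.full((4, 4), -0.5)])

cam = grad_cam(acts, grads)
```

In the toy input, only the top-left location survives the ReLU, so the map highlights the region whose channel has a positive gradient.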

3. LIME Explanations

LIME highlights super-pixels that most heavily influenced the YOLOv8 prediction.
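The idea behind LIME can be sketched without the `lime` package: switch image segments on and off at random, query the model on each perturbed image, and fit a proximity-weighted linear model whose coefficients rank the segments. The version below is a simplified illustration. It uses a regular grid in place of a real superpixel segmentation, and `lime_grid`, `toy_score`, and every parameter value are assumptions made for the example.

```python
import numpy as np

def lime_grid(image, predict_fn, grid=4, n_samples=500, kernel_width=0.25, seed=0):
    """LIME-style importance per grid cell for a 2-D image and a scalar scorer."""
    rng = np.random.default_rng(seed)
    h, w = image.shape
    # Label each pixel with its grid-cell index (stand-in for superpixels).
    rows = np.arange(h) * grid // h
    cols = np.arange(w) * grid // w
    segments = rows[:, None] * grid + cols[None, :]
    n_seg = grid * grid

    masks = rng.integers(0, 2, size=(n_samples, n_seg))  # 1 = keep the segment
    masks[0] = 1                                         # include the unperturbed image
    scores = np.empty(n_samples)
    for i, m in enumerate(masks):
        perturbed = image * m[segments]                  # zero out dropped segments
        scores[i] = predict_fn(perturbed)

    # Samples closer to the original image get a larger weight.
    dist = 1.0 - masks.mean(axis=1)
    weights = np.exp(-(dist ** 2) / kernel_width ** 2)

    # Weighted least squares with an intercept column.
    X = np.hstack([masks, np.ones((n_samples, 1))])
    sw = np.sqrt(weights)[:, None]
    coef, *_ = np.linalg.lstsq(X * sw, scores[:, None] * sw, rcond=None)
    return coef[:-1, 0].reshape(grid, grid)

def toy_score(img):
    """Hypothetical black-box model: reacts only to the top-left 2x2 block."""
    return float(img[0:2, 0:2].mean())

image = np.ones((8, 8))
importance = lime_grid(image, toy_score)
```

Since `toy_score` depends on a single grid cell, the fitted coefficients should single out that cell, which makes the example easy to check by eye before swapping in a real detector score.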

Challenges & Solutions

Challenge: Model Interpretation

Problem: YOLOv8’s complex architecture makes it difficult to extract traditional gradients for XAI.

Solution: Implemented a hook-based system to capture activations from the final bottleneck layers.
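A hook-based capture of this kind might look like the following PyTorch sketch. It is not the project's actual code: the class name `ActivationGradientHook` is hypothetical, and a small `nn.Sequential` model stands in for YOLOv8, whose layer layout is not described here. The sketch uses `register_forward_hook` on the module and `register_hook` on its output tensor, so it only works when gradients are enabled during the forward pass.

```python
import torch
import torch.nn as nn

class ActivationGradientHook:
    """Stores the output of one layer and the gradient flowing back into it."""

    def __init__(self, layer):
        self.activations = None
        self.gradients = None
        self._handle = layer.register_forward_hook(self._on_forward)

    def _on_forward(self, module, inputs, output):
        self.activations = output.detach()
        # Tensor hook: called once the gradient w.r.t. this output is computed.
        output.register_hook(self._on_backward)

    def _on_backward(self, grad):
        self.gradients = grad.detach()

    def remove(self):
        self._handle.remove()

# Hypothetical stand-in model; with a real detector, pass the layer of interest instead.
model = nn.Sequential(
    nn.Conv2d(3, 8, 3, padding=1), nn.ReLU(),
    nn.Conv2d(8, 16, 3, padding=1), nn.ReLU(),
    nn.AdaptiveAvgPool2d(1), nn.Flatten(), nn.Linear(16, 2),
)

hook = ActivationGradientHook(model[2])     # last convolutional layer
x = torch.randn(1, 3, 32, 32)
scores = model(x)
target = int(scores[0].argmax())
scores[0, target].backward()                # fills hook.gradients
hook.remove()
```

After the backward pass, `hook.activations` and `hook.gradients` hold tensors of the same shape, which is the pair of inputs a Grad-CAM computation needs.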

Tech Stack Details

- Deep Learning: YOLOv8 (Ultralytics) for real-time detection.

- Explainability: Captum (LRP), LIME, and custom Grad-CAM implementations.

- Processing: OpenCV for image transformation and heatmap overlay.

Project Status: Completed